The week was another potpourri of convection initiation (CI) challenges, ranging from evening convection in WY/SD/ND/NE, to afternoon convection in PA/NY, and back over to OK/TX/KS for a few days. As in the previous week, we encountered many events in which we struggled with the timing of the onset of convection. But we can consistently place good categorical outlooks over the region, and have consistently anticipated the correct location of the first storms. I think the current perception is that we can identify the mechanisms and thus the episodes of convection, but timing the features remains a big challenge. The models tend not to be consistent (at least in the aggregate) for at least two reasons: no weather event is identical to any other, and the process by which CI occurs can vary considerably.

The processes that can lead to CI were discussed on Friday and include:

1. a sufficient lifting mechanism (e.g. a boundary),

2. sufficient instability in the column (e.g. CAPE),

3. instability that can be quickly realized (e.g. low-level CAPE, weak CIN, a low LCL, or an LFC close to the LCL),

4. a deep moist layer (e.g. reduced dry air entrainment),

5. a weakening cap (e.g. cooling aloft).
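To make the list above a little more concrete, here is a minimal sketch of an ingredients checklist applied to a single point. It is only an illustration: the `Sounding` fields, the function names, and every threshold are hypothetical placeholders chosen for the example, not values we used in the experiment or values any model applies.

```python
from dataclasses import dataclass


@dataclass
class Sounding:
    """Hypothetical point diagnostics from a model or observed sounding."""
    boundary_nearby: bool   # 1. a lifting mechanism is present
    cape_j_kg: float        # 2. CAPE in the column (J/kg)
    cin_j_kg: float         # 3. CIN magnitude, as a positive number (J/kg)
    lcl_m: float            # 3. LCL height (m AGL)
    lfc_m: float            # 3. LFC height (m AGL)
    moist_depth_m: float    # 4. depth of the moist layer (m)
    cap_weakening: bool     # 5. the cap is weakening (e.g. cooling aloft)


# Illustrative thresholds only; these numbers are assumptions for the sketch.
THRESHOLDS = {
    "min_cape_j_kg": 500.0,
    "max_cin_j_kg": 50.0,
    "max_lfc_lcl_gap_m": 1000.0,
    "min_moist_depth_m": 1500.0,
}


def ci_ingredients(s: Sounding) -> dict:
    """Return a pass/fail flag for each of the five ingredients."""
    return {
        "lift": s.boundary_nearby,
        "instability": s.cape_j_kg >= THRESHOLDS["min_cape_j_kg"],
        "quickly_realized": (
            s.cin_j_kg <= THRESHOLDS["max_cin_j_kg"]
            and (s.lfc_m - s.lcl_m) <= THRESHOLDS["max_lfc_lcl_gap_m"]
        ),
        "deep_moisture": s.moist_depth_m >= THRESHOLDS["min_moist_depth_m"],
        "weakening_cap": s.cap_weakening,
    }


def missing_ingredients(s: Sounding) -> list:
    """List the ingredients that fail the (hypothetical) thresholds."""
    return [name for name, ok in ci_ingredients(s).items() if not ok]
```

A checklist like this says nothing about timing, which is where we struggle most; at best it flags which ingredient a given model solution is leaning on.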

That is quite a few ingredients to consider quickly. Any errors in the models can then be amplified to either promote or hinder CI. In the last 2 weeks, we had similar simulations along the dryline in OK/TX, where the models produced storms where none were observed. Only a few of the storms produced by the model were longer lasting, but the model also produced what we have called CI failure: storms that initiate but do not last very long. Using this information, we can quickly assess that it was difficult for the model to produce storms in the aggregate. How we use this information remains a challenge, because storms were still produced. It is quite difficult to verify the processes we are seeing in the model, and thus either develop confidence in them or determine that the model is just prolific in developing some of these features.

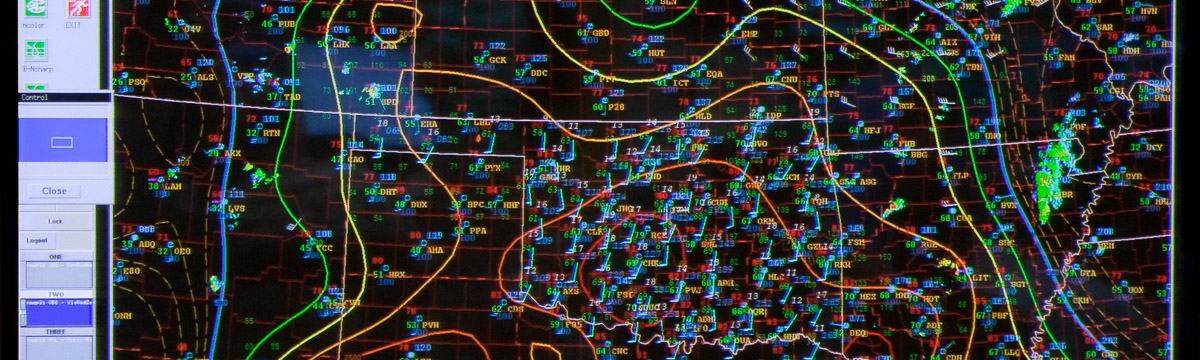
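One way to put a number on the CI failure idea described above is to split simulated storms by how long they last. The sketch below assumes we already have a list of storm lifetimes in minutes from some tracking step; the function name and the 60-minute cutoff are hypothetical choices for illustration, not a definition we settled on.

```python
def summarize_ci_failure(durations_min, min_duration_min=60.0):
    """Split simulated storms into sustained storms and CI failures.

    durations_min: lifetimes (minutes) of storms that initiated in the model.
    min_duration_min: assumed cutoff; shorter-lived storms count as CI failure.
    """
    failed = [d for d in durations_min if d < min_duration_min]
    sustained = [d for d in durations_min if d >= min_duration_min]
    total = len(durations_min)
    return {
        "sustained": len(sustained),
        "ci_failure": len(failed),
        # Fraction of initiating storms that did not last; None if no storms.
        "failure_fraction": len(failed) / total if total else None,
    }


# Example with made-up lifetimes for five simulated storms.
summary = summarize_ci_failure([20, 35, 90, 15, 120])
print(summary)
```

A high failure fraction would be consistent with the model struggling to sustain storms in the aggregate, though it does not by itself tell us whether the simulated processes are realistic.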

What is becoming quite clear is that we need far more output fields to adequately scrutinize the models. However, given the self-imposed time constraints, we need a data visualization system that can handle lots of variables, perform calculations on the fly, and deal with many ensemble members. We have been introduced to the ALPS system from GSD, and it seems to be up to the challenge, given the rapid visualization and unique display capabilities for which it was designed (e.g. large ensembles).

We also saw more of what the DTC is offering in terms of traditional verification, object-based verification, and neighborhood object-based verification. There is just so much to look at that it is overwhelming day to day. I hope to look through this in great detail in the post-experiment analysis. There is a lot of information buried in that data that is very useful now (e.g. day to day) and will be useful later (e.g. aggregate statistics). This is truly a good component of the experiment, but there is much work to be done to make it immediately relevant to forecasting, even though its traditional impact comes after the experiment. Helping every component fill an immediate niche is always a challenge. And that is what experiments are for: identifying challenges and finding creative ways to help forecasting efforts.