Though we do lots of forecasting in the Spring Forecasting Experiment (SFE), we also evaluate cutting-edge numerical weather prediction. This year, we’re looking at many iterations of the Finite-Volume Cubed-Sphere model, or FV3, which is slated to eventually replace the GFS as the next-generation global prediction system. We have versions of FV3 provided by the Geophysical Fluid Dynamics Laboratory (GFDL, where FV3 was developed), the National Severe Storms Laboratory (NSSL), and the Center for the Analysis and Prediction of Storms (CAPS). GFDL and NSSL are each providing one member, while CAPS is providing an ensemble of eleven members that use different microphysics schemes and different planetary boundary layer (PBL) schemes. These different configurations can help illuminate the behavior of this new model core with multiple sets of physical parameterizations, grid spacings and convolutions, and FV3 versions. For more details, see the Operations Plan.

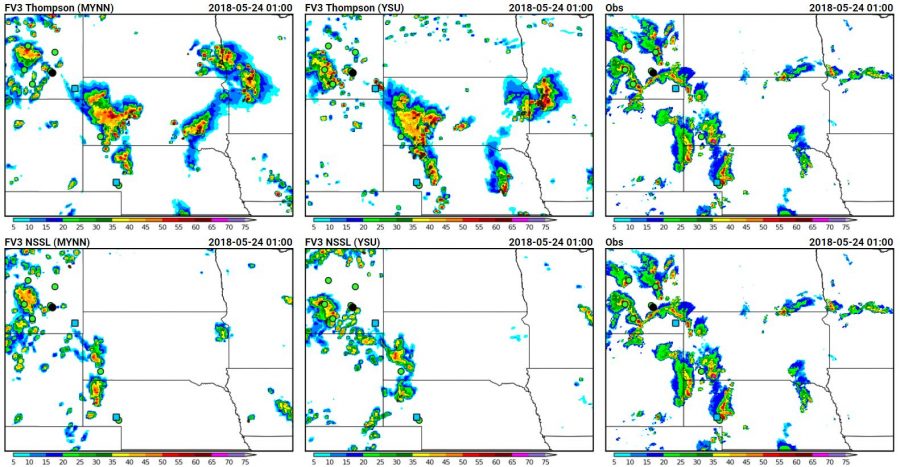

Last Wednesday, May 23rd, provided one of our most interesting case studies for the different versions of CAPS FV3, with differences in storm structure, location of precipitation, and thermodynamic environment among the different microphysics and PBL schemes. The panels below show the reflectivity fields for CAPS FV3 members with four different PBL schemes, all of which use the Thompson microphysics.

Clearly, there are large differences in storm structure and intensity among the members, though all of them highlight two areas of convection, as were observed. However, most of the members have more intense convection across South Dakota than actually occurred, overdoing the intensity of the convection in the eastern half of the domain. The only member that doesn’t have overly strong convection on the eastern South Dakota/Nebraska border is the SA-MYNN member, which uses the Scale-Aware MYNN scheme. All schemes also place the western area of convection too far east: the observed storms at 0100 UTC were on the Wyoming/Nebraska border. Participants commented on nearly all of these aspects, with one noting that “…after being the most accurate member early, the MYNN is a bit too overactive across eastern SD early in the day and maintains intensity across MT too long. The YSU members are a bit overdone during the event, though post-severe convection was handled quite well.”

Differences were larger among the microphysics schemes, which tend to change the reflectivity more than the PBL schemes do. The NSSL microphysics members (shown in the bottom row of the above figure) were much less active than the Thompson microphysics members (the top row) across the domain, having almost no convection in the eastern half of the domain by 0100 UTC. They also had smaller and less intense regions of stratiform precipitation in the western storms. In this portion of the evaluation, we ask participants to compare the NSSL and the Thompson microphysics in terms of reflectivity intensity, reflectivity and updraft helicity (UH) location, and storm structure. Most participants liked the storm structure of the Thompson members better than that of the NSSL members, but liked the magnitude of the reflectivity in the NSSL members better. As one participant said, “For this event, I would have preferred using the Thompson members because you can mentally adjust for reflectivity magnitudes, but you can’t mentally manufacture storms that were nonexistent in the NSSL members.” By breaking out different characteristics of the convection, we hope to understand how different aspects of the model performance differ.

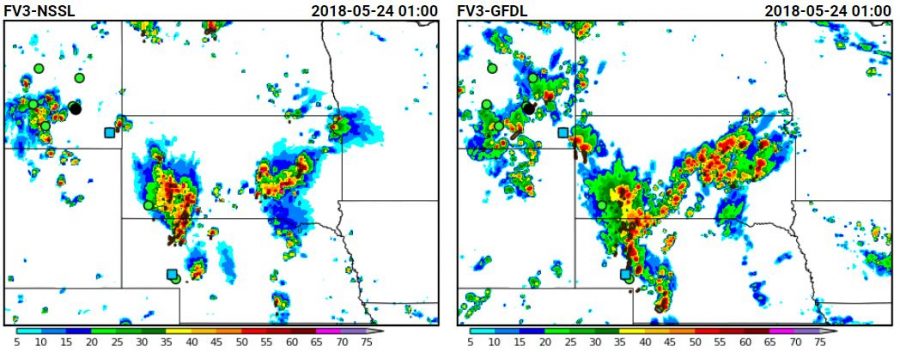

The GFDL and NSSL FV3 each provided another solution for the convection. The GFDL version had extremely high reflectivity, even compared to the CAPS Thompson members, with convection stretching across most of South Dakota. The NSSL FV3 showed two distinct areas of convection, but underdid the storms in Montana compared to the other versions.

These evaluations are showing how much work there is to be done after the experiment. With all of these differences between FV3 configurations, the SFE activities will help the development of FV3 by giving feedback on its performance at convective scales. But, as is evident from this one-hour snapshot, the question of which model performs best is far from straightforward: there are many aspects of the model to consider! Luckily, our participants have been giving us great feedback as to which aspects to target first.