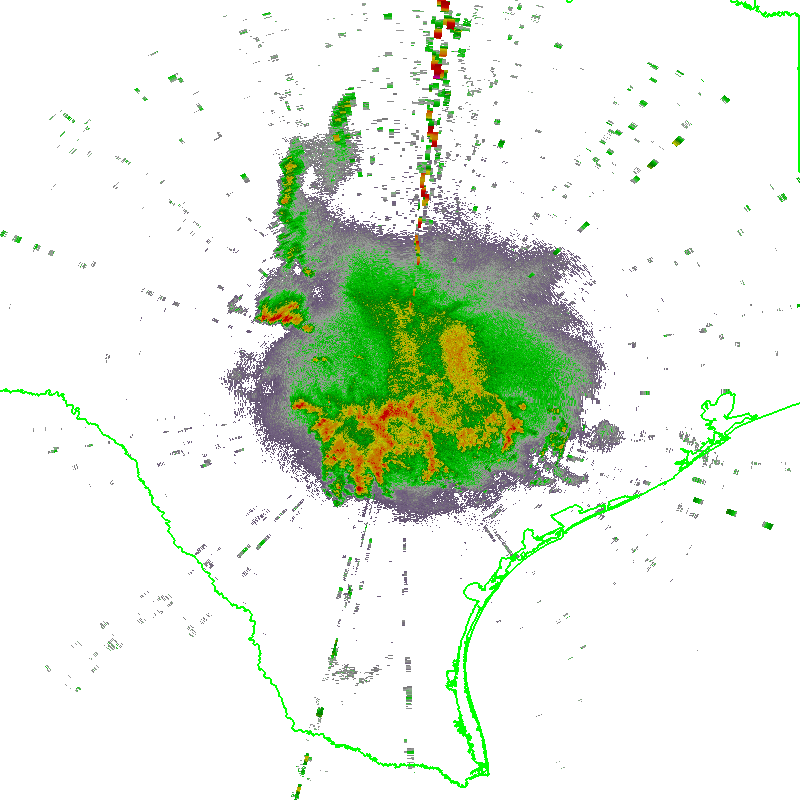

The implementation of the Supplemental Adaptive Intra-Volume Low-Level Scan (SAILS) for the WSR-88D radars presented a problem for the WDSS-II ingestor ldm2netcdf, because ldm2netcdf relied on VCP definitions stored in XML configuration files. Those XML files defined which elevation matched each tilt in the volume. However, SAILS can insert a supplemental 0.5-degree scan into the existing VCP at any time. Without changes, ldm2netcdf would incorrectly label the new 0.5-degree tilt as the next tilt expected by its VCP XML file.

To solve this problem, ldm2netcdf now processes Message 5 in the Level-II data stream (the RDA Volume Coverage Data) to map each incoming tilt to the correct elevation. The new 0.5-degree tilts are labeled and saved correctly, just like any other 0.5-degree tilt.
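To make the labeling problem concrete, here is a minimal Python sketch. It is not the ldm2netcdf code: the elevation lists are illustrative rather than a real VCP, and `mislabeled_cuts` is a hypothetical helper. It compares labels taken from a static list (as the XML files provided) with the cut list the radar itself reports, which is what Message 5 provides.

```python
# Illustrative elevation lists only; real values come from the VCP XML
# files and from Message 5, and real VCPs have more cuts than this.
STATIC_VCP = [0.5, 0.9, 1.3, 1.8, 2.4]          # what a static definition expects
MESSAGE5_CUTS = [0.5, 0.9, 1.3, 0.5, 1.8, 2.4]  # SAILS adds a 0.5 cut mid-volume


def mislabeled_cuts(static_vcp, actual_cuts):
    """Return (index, static_label, actual_elevation) for each cut that a
    positional lookup in the static list would label incorrectly."""
    return [
        (i, expected, actual)
        for i, (expected, actual) in enumerate(zip(static_vcp, actual_cuts))
        if expected != actual
    ]


if __name__ == "__main__":
    for index, wrong, right in mislabeled_cuts(STATIC_VCP, MESSAGE5_CUTS):
        print(f"cut {index}: static list says {wrong}, radar says {right}")
```

In this toy case the supplemental cut at index 3 would be labeled 1.8, and every cut after it shifts by one. Reading the elevations from the radar's own cut list avoids both errors.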

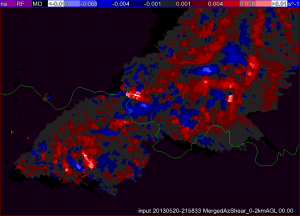

Algorithms listening to 0.5-degree elevations will be notified of these new tilts as usual. Algorithms that listen to all tilts will insert them into the constantly updating virtual volume as the latest tilt of data for the 0.5-degree elevation. So, with the change to ldm2netcdf, downstream algorithms such as w2qcnndp, w2vil, and w2merger handle the SAILS tilt transparently.
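The virtual volume idea can be sketched in a few lines of Python. This is an assumption-laden illustration, not the WDSS-II implementation: the `VirtualVolume` class and its methods are hypothetical names, and the tilt data is reduced to a plain string.

```python
class VirtualVolume:
    """Keeps only the most recent tilt for each elevation angle."""

    def __init__(self):
        # Maps elevation (degrees, rounded) to a (time, data) pair.
        self._tilts = {}

    def insert(self, elevation, time, data):
        # A newer tilt at the same elevation replaces the older one.
        self._tilts[round(elevation, 2)] = (time, data)

    def latest(self, elevation):
        return self._tilts[round(elevation, 2)]

    def elevations(self):
        return sorted(self._tilts)


if __name__ == "__main__":
    volume = VirtualVolume()
    volume.insert(0.5, time=0, data="first 0.5 tilt")
    volume.insert(0.9, time=1, data="0.9 tilt")
    volume.insert(1.3, time=2, data="1.3 tilt")
    # The SAILS tilt arrives labeled 0.5, so it updates the 0.5 slot.
    volume.insert(0.5, time=3, data="SAILS 0.5 tilt")
    print(volume.elevations(), volume.latest(0.5))
```

Because the SAILS tilt carries the correct 0.5-degree label, it refreshes the existing slot instead of creating a spurious extra level, which is why downstream consumers need no changes.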

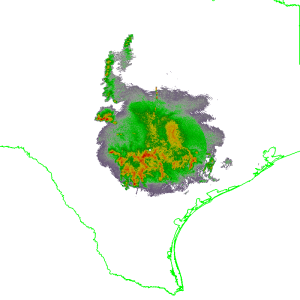

If you do not want the SAILS elevation to be inserted into the data stream, you can specify the ‘-e’ option on the ldm2netcdf command line to separate out the extra SAILS tilts. The SAILS tilts will then be saved into a separate directory, such as Reflectivity_SAILS or AliasedVelocity_SAILS. We do not recommend this, as you are essentially throwing away the extra information.
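The resulting directory layout can be sketched as follows. This is a hypothetical illustration of the naming described above, not ldm2netcdf code: `output_subdir`, `is_sails`, and `separate_sails` are made-up names, and how ldm2netcdf actually identifies a SAILS tilt is not shown here.

```python
def output_subdir(product, is_sails, separate_sails):
    """Pick the product directory for a tilt.

    separate_sails stands in for running with the '-e' option;
    is_sails is an assumed flag marking a supplemental SAILS tilt.
    """
    if is_sails and separate_sails:
        return f"{product}_SAILS"
    return product


if __name__ == "__main__":
    # Default behavior: SAILS tilts go alongside the regular tilts.
    print(output_subdir("Reflectivity", is_sails=True, separate_sails=False))
    # With separation enabled: SAILS tilts go to their own directory.
    print(output_subdir("AliasedVelocity", is_sails=True, separate_sails=True))
```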

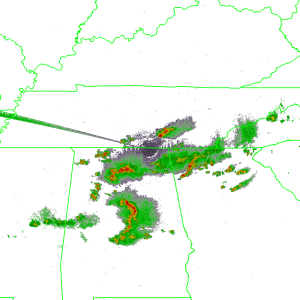

Finally, we took this opportunity to eliminate some outdated command line options in ldm2netcdf. The first is the ‘-D’ option for dealiasing. The dealiasing code in ldm2netcdf is very old, and the dealias2d command provides much better results. The second is the ‘-c’ option for compositing, which will be removed because w2vil does a much better job of creating composites.

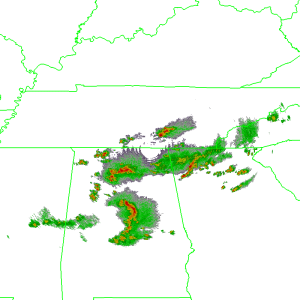

The new changes are being tested and will be rolled out when all the kinks are worked out.